Votal AI today announced the launch of its RLHF-trained adversarial attacker model along with a community-driven open-source Attack Catalog, marking a significant advancement in securing agentic AI systems.

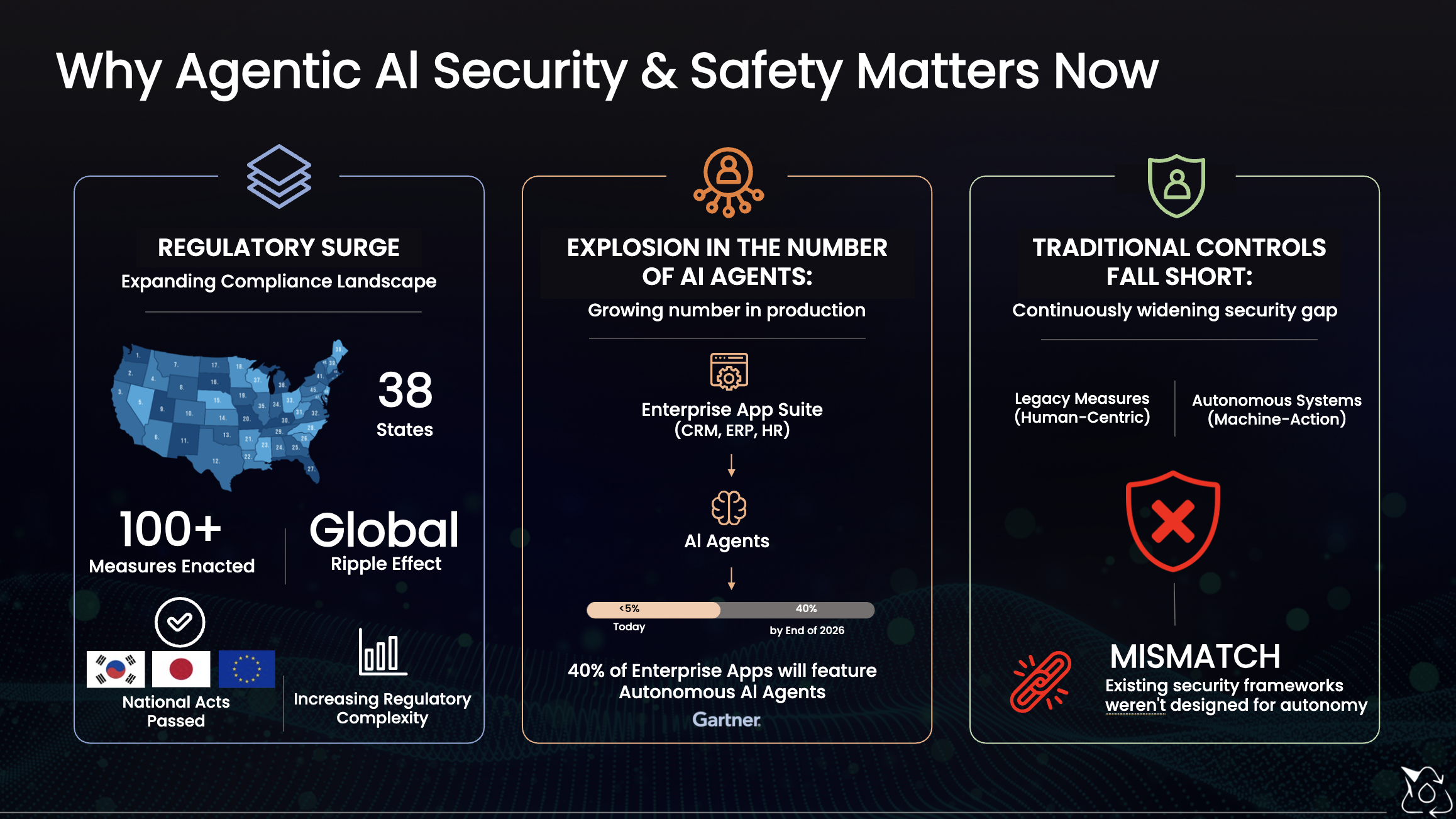

As enterprises increasingly adopt autonomous AI agents capable of executing tasks, interacting with tools, and making decisions, the attack surface has expanded dramatically. Traditional, point-in-time security testing methods are no longer sufficient to address the dynamic and evolving risks associated with these systems.

To address this challenge, Votal AI’s new attacker model uses Reinforcement Learning from Human Feedback (RLHF) to simulate real-world adversarial behavior. The model continuously learns from attack outcomes, enabling it to identify complex, multi-stage vulnerabilities across the entire agentic AI lifecycle, including prompt injection, memory poisoning, privilege escalation, tool misuse, and data exfiltration.

In parallel, Votal AI has introduced an open-source Attack Catalog, providing a transparent and continuously evolving library of adversarial techniques. This initiative enables organizations and the broader security community to collaborate, standardize testing practices, and stay ahead of emerging AI threats.

These innovations are part of Votal AI’s Continuous Agentic Red Teaming (CART) platform, which delivers automated, continuous security testing for AI systems. CART helps enterprises proactively detect vulnerabilities, validate security controls, and maintain resilience as AI systems evolve.

Votal AI will showcase these capabilities at the upcoming RSA Conference 2026 in San Francisco, where attendees can see live demonstrations of simulated real-world AI attacks and continuous red teaming.

With this launch, Votal AI continues to advance its mission of enabling organizations to build, deploy, and scale secure and trustworthy AI systems.